To paraphrase the old adage, the road to content failure is paved with good intentions. Our latest study of content operations and leadership confirms that while most professionals want to measure content effectiveness, few have a system of content intelligence that enables them to do so. In this article, we'll share what we've learned about measuring content effectiveness and how evaluating content regularly supports more successful content operations.

Let’s begin with some background on the study.

About the Study

With our 2021 study on content operations, we set out to document the current state of content operations and to uncover the success factors for content departments. We surveyed 109 content team members and leaders from a variety of industries to better understand which processes drive their success and where their pain points in content operations lie. We followed up the survey with a series of interviews to gain additional context and support for our findings.

In this study, we explored the measurement of content effectiveness in detail. We sought to learn how many companies regularly evaluate their content's effectiveness and which tools and metrics they use to do so. For companies that do not regularly evaluate their content, we wanted to understand why. Here's what we found:

Lack of Content Effectiveness and Success Measurement

A wealth of research shows that content decisions informed by data are the most effective. Yet our study found that 65% of content leaders and teams do not regularly evaluate or measure content effectiveness and success.

“One of our challenges is waking people up to the fact that there is value in this technical content, and not just in supporting customers, but value that can be monetized, such as generating sales.” — Survey respondent

By not collecting or analyzing data on their content, these companies risk missing opportunities to drive real business growth with it. To learn why so many companies are not measuring their content's impact, we asked several follow-up questions about the challenges they face in evaluating content effectiveness.

Challenges to Measuring

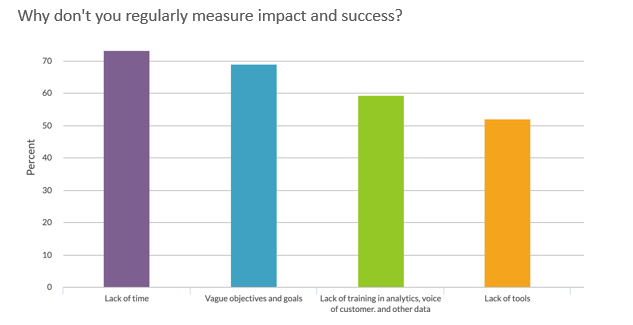

When we asked survey respondents why they don't regularly measure their content's success, the most common reasons included a lack of time, vague objectives, and a lack of training in content measurement. Many respondents also reported that a lack of tools held them back.

Taken together, these challenges reveal that many organizations lack a system of content intelligence for gaining insight into their content's performance. Without clear, measurable goals for your content, it is impossible to measure its performance adequately or to know which tools will give you the data you need. Without the proper tools in place, you can't train your team members to understand how your content is performing. And without goal-focused data collection and the ability to analyze and interpret that data, it's impossible to take targeted action to improve your content's effectiveness.

To understand why a system of content intelligence for gaining data-driven insights matters, we also examined the relationship between regularly evaluating content and reported success.

Measurement Correlates with Success

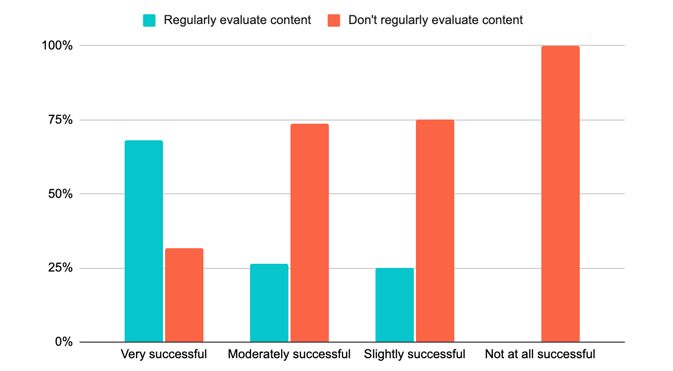

Participants in our study who reported regularly evaluating content effectiveness were much more likely to report success with their content. These teams were more than three times as likely to report very or extremely successful content operations as teams that did not regularly evaluate their content's success.

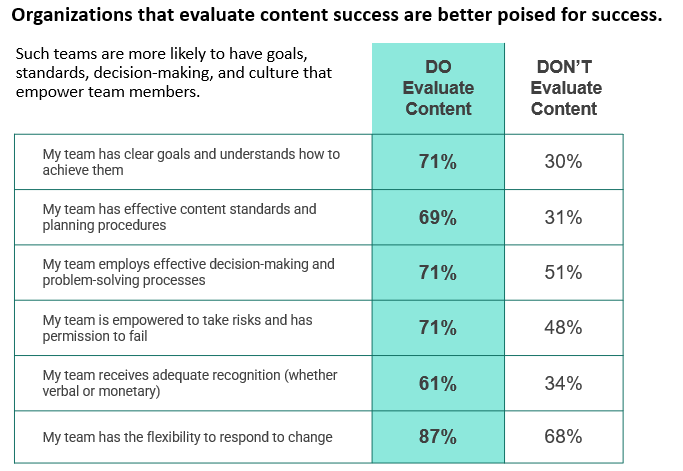

Additionally, we found that teams regularly evaluating their content’s success were more likely to report conditions that better positioned them for success:

“Now the marketing team has realized that they have this asset that is drawing all of this traffic and can be used to generate leads and potentially sales…And this is helping us get buy-in for added value projects.” — Survey respondent

Overall, teams that evaluate their content report both higher levels of success and conditions conducive to success. This suggests that measuring content effectiveness is a significant opportunity to improve the success of your content operations.

What This Means

Our findings indicate that regular evaluations of content effectiveness and ROI help content teams build credibility, work more efficiently, and earn recognition for their work. In short, establishing content intelligence in your organization is essential to your content's success.

If you're already evaluating content effectiveness, explore ways to make the process more efficient and insightful. Review your content's goals and ensure that they align with your overall business objectives. Also, make sure that your current data collection fully answers the questions you have about your content's performance.

If you're not yet evaluating your content's effectiveness, it's not too late to start, and catching up is well worth the effort.

Measuring your content's impact and effectiveness is the only way to make data-driven decisions about your content. By proactively planning for content intelligence, you take the first step toward a better understanding of your content and how it can advance your business objectives.

Comments

Colleen, Andrew, I remember your past posts on content effectiveness as well, and I often use your ‘content ROI’ posts as a reference for my team and my clients.

When we talk about content effectiveness, I sometimes notice that ‘effectiveness’ means different things to different teams and people. I fully understand how ‘content goals’ should bring different teams together around their expectations and their individual goals for content, but I have realized that, ultimately, UX folks see content effectiveness in a different light than marketers do. If you have noticed this too, how do you address it?

PS: Your posts on content ROI are gold; I referenced one of them in my post: https://medium.com/@vingar/content-is-an-approach-content-strategy-is-the-outcome-866b4c32b43c


We invite you to share your perspective in a constructive way. To comment, please sign in or register. Our moderating team will review all comments and may edit them for clarity. Our team also may delete comments that are off-topic or disrespectful. All postings become the property of Content Science Review.