Generative AI has become the most talked-about piece of technology since the birth of the Internet. Its capacity to create text, images, and audio from nothing but a prompt is fascinating, and it is likely to grow only more effective and diverse over time.

Already, both broad and purpose-built platforms are popping up like mushrooms after a rainstorm. Some are highly capable chatbots, able to hold an, ironically, “real” conversation with a user. Others are purpose-built engines of business, with narrower scopes but greater efficacy.

Selecting content technology for any purpose is a vital task that requires understanding the potential and pitfalls of each option. To better understand where generative AI is genuinely useful, Content Science tested three of the most prominent AI tools on the market: ChatGPT, Dall-E 2, and AskWriter.

ChatGPT

Arguably the most famous of the three tools we tested, ChatGPT is a broad-scope Natural Language Processing (NLP) tool that generates text in response to a prompt.

OpenAI trained ChatGPT on enormous volumes of publicly available data to develop an AI capable of mimicking human speech patterns while remaining on topic.

ChatGPT is undoubtedly an impressive, powerful piece of technology that will have a significant impact on the world as a whole. However, in its current state, ChatGPT is of limited use to content professionals working in a business setting.

Let’s look at the tests conducted by Content Science. We tested ChatGPT in three different ways, with each test increasing in difficulty. We administered every test 10 times to evaluate any changes and to account for any ‘hiccups’.

Test #1

Difficulty: Easy

Our prompt: ‘In 200 words, what is ChatGPT?’

We asked ChatGPT to describe itself with only one parameter: the description had to be 200 words long. On content, ChatGPT excelled, succinctly and accurately describing how it works and what it is used for. Mechanically, however, it failed to hit the 200-word length in half of the runs (5 of 10).
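When a prompt is repeated many times, a length check like this is easy to automate. The sketch below is a hypothetical helper, not the method Content Science used; it assumes words are counted by splitting on whitespace and that a run “adheres” when its word count falls within an optional fractional tolerance of the target (the article does not say how strictly the 200-word length was judged).

```python
def within_word_target(text: str, target: int = 200, tolerance: float = 0.0) -> bool:
    """Return True if the whitespace-delimited word count of `text`
    is within `tolerance` (a fraction of `target`) of `target`."""
    count = len(text.split())
    return abs(count - target) <= target * tolerance


def adherence_rate(outputs: list[str], target: int = 200, tolerance: float = 0.0) -> float:
    """Fraction of outputs whose word count meets the target."""
    hits = sum(within_word_target(o, target, tolerance) for o in outputs)
    return hits / len(outputs)


if __name__ == "__main__":
    # Ten hypothetical outputs: five hit 200 words exactly, five run long at 230.
    outputs = ["word " * 200] * 5 + ["word " * 230] * 5
    print(adherence_rate(outputs))  # 0.5
```

Setting `tolerance` above zero lets a reviewer decide how close is close enough; with the default of zero, only an exact match counts.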

Test #2

Difficulty: Medium

Our prompt: ‘How can you measure content effectiveness?’

This time, ChatGPT delivered correct but erratic answers. Each time we gave the prompt, ChatGPT returned a numbered list of content effectiveness metrics, with the metrics changing each time. All of the metrics were correct, but hardly unique or comprehensive.

Additionally, the pieces ChatGPT produced varied widely from run to run, so you could never be sure all the correct material was being covered.

So, whilst nothing ChatGPT produced was ‘incorrect’, its generic output made for ineffective content. The information it generated was surface-level and repeated the same points found in countless articles already published. As a tool, it therefore seems ill-equipped to create a substantial article, even where plenty of source information is available.

Test #3

Difficulty: Hard

Our prompt: ‘What are the levels of content maturity?’

This test was difficult for ChatGPT because the prompt was short and vague. Additionally, ‘content maturity’ is a less well-known term than ‘content effectiveness’, so there was less published information for it to draw on.

In the first run, ChatGPT surprisingly grasped the idea, giving us a result roughly parallel to Colleen Jones’s own content maturity model.

In seven runs, it instead outlined ESRB and MPA ratings, explaining the reasoning behind rating something “E,” “M,” or “PG.”

In the remaining two runs, ChatGPT tackled our query by explaining which content ratings are appropriate for different age groups.

We found that, even after refining the prompt to be more specific, ChatGPT struggled to understand what the user was searching for. It seemed unable to get past the terms “content” and “maturity” appearing in the same prompt.

Our Verdict on ChatGPT

Ultimately, ChatGPT is a chatbot and, in this sense, it excels. Asking ChatGPT questions like “How are you doing?” or “What did you think of Revenge of the Sith?” produced logical, fully formed answers that could easily have come from a real person.

Although it couldn’t produce rock-solid articles at the click of a button, ChatGPT still holds some value for the content industry. Its article structure is weak, but it can surface facts quickly and accurately. Its content would still need human review, but ChatGPT could cut down on research time where short, informative articles are needed.

ChatGPT also proved very capable of producing taglines, titles, and other eye-catching pieces of text. The titles it generated were always on-topic, and we were surprised at just how creative they could be.

Dall-E 2

Dall-E 2 is another generative AI created by OpenAI. Trained on countless publicly available images from the Internet, Dall-E 2 can create startlingly effective images from prompts such as “yellow bird with a blue beak” or “10 people smiling at a picnic.”

The value of Dall-E 2 depends largely on the goals of the organization using it. For a massive Fortune 500 company with highly specific brand guidelines, imagery, and marketing goals, Dall-E 2, we would wager, would be largely useless. It won’t, for example, be able to reproduce the exact shade used throughout a company’s branding assets, and it won’t be capable of creating consistent-looking graphics.

However, for a small business with little money to spend on creative services, Dall-E 2 holds far more value.

For example, $15 buys a customer 115 Dall-E 2 credits.

It costs one credit to generate images from a prompt, and each generation from a prompt produces four images. Therefore, $15 will get you 460 images.
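The credit arithmetic is simple to check, or to rerun if prices change. This is a sketch using the figures quoted above, which reflect pricing at the time of our testing and may since have changed; the constant and function names are illustrative, not part of any OpenAI API.

```python
# Figures as reported in this article at the time of testing (may have changed).
PRICE_USD = 15
CREDITS_PER_PURCHASE = 115
CREDITS_PER_GENERATION = 1
IMAGES_PER_GENERATION = 4


def images_for_credits(credits: int) -> int:
    """Number of images a given number of credits buys."""
    generations = credits // CREDITS_PER_GENERATION
    return generations * IMAGES_PER_GENERATION


def cost_per_image(price: float = PRICE_USD, credits: int = CREDITS_PER_PURCHASE) -> float:
    """Effective price of a single image, in dollars."""
    return price / images_for_credits(credits)


if __name__ == "__main__":
    print(images_for_credits(CREDITS_PER_PURCHASE))  # 460
    print(f"${cost_per_image():.4f} per image")      # $0.0326 per image
    # Our test protocol: 10 generations per prompt.
    print(images_for_credits(10))                    # 40
```

The last line mirrors the testing protocol described next: 10 generations per prompt at 4 images each yields 40 images per prompt.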

Once again, Content Science tested the quality of Dall-E 2 with three separate prompts, each administered 10 times. Each prompt therefore produced 40 images in total (10 generations of 4 images each).

Test #1

Difficulty: Easy

Our prompt: ‘Generate a logo for a hamburger food truck’

The logo graphics produced were effective and on topic. Many combined elements of a ‘truck’ and a ‘hamburger’ to create very bespoke graphics. However, the line work was erratic, sometimes appearing with perforated or spiked edges. As a result, a human creative professional would have had to edit Dall-E 2’s work before it was truly ‘usable’.

The copy accompanying the graphics was also universally misspelled and sometimes even nonsensical, with random non-English characters appearing in many cases.

Test #2

Difficulty: Medium

Our prompt: ‘How to make good content’

The images Dall-E 2 created for us were accurate and on-topic, but too generic. Nothing produced from this prompt seemed any better or more distinctive than the royalty-free images you can easily find online.

Dall-E 2 also seemed to cling to the idea that ‘content’ was a business term, consistently producing generic business imagery rather than anything specific to content itself.

Test #3

Difficulty: Hard

Our prompt: ‘Generate a graphic for content maturity’

We felt this was a niche ‘ask’, focusing on a term coined by Content Science’s founder, Colleen Jones.

Dall-E 2 proved unable to generate anything related to the topic, instead creating a series of images of older people holding charts. Faced with a concept this specific to the content industry, Dall-E 2 was utterly incapable of producing any worthwhile material.

Our Verdict on Dall-E 2

Images created with generative AI like Dall-E 2 appear to be less useful than text created with generative AI.

Image-generating AI can be just as broad in scope and capability as text-generating AI, but it is far less consistent and requires substantially more human oversight.

AskWriter

AskWriter is an NLP tool, just like ChatGPT. The difference is that AskWriter is purpose-built for professional writing. It is one of a suite of tools within Writer’s larger platform, which means it also has access to the data and users of Writer’s other offerings.

AskWriter uses a proprietary Large Language Model (LLM) that draws its intelligence exclusively from business and professional writing. As a result, AskWriter cannot handle chatbot tasks like ‘discussing a new movie’, but it seems far better at producing professional, informative content.

AskWriter is also significantly more customizable than ChatGPT. You can select and create templates, describe a desired call to action, and even specify the copy’s intended use, such as external communications, marketing, or a recruitment campaign.

Content Science tested AskWriter with three prompts of increasing difficulty, with each prompt administered 10 times.

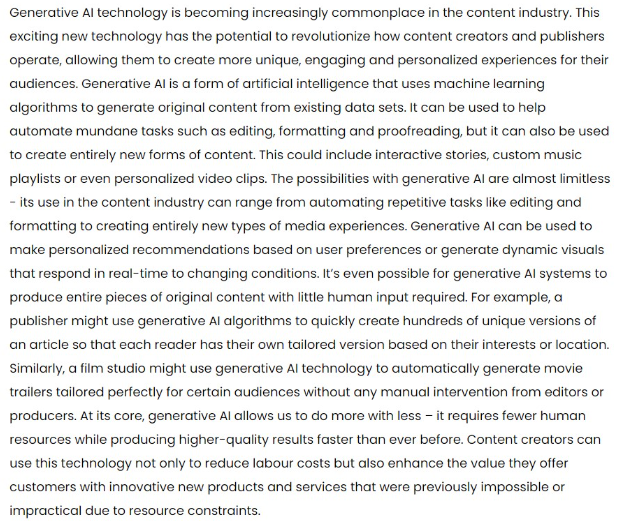

Test #1

Difficulty: Easy

Our prompt: ‘How can generative AI be used in the content industry?’

The article AskWriter produced in response was adequate, full of relevant information, and very readable. While not exactly comprehensive, it outlined several uses of generative AI in the content industry and made some sound arguments.

Test #2

Difficulty: Medium

Our prompt: ‘How was IBM’s Watson responsible for generative AI?’

Again, AskWriter’s performance exceeded expectations. Rather than generalizing cause-and-effect, AskWriter established a clear history and then made sound arguments based on that history.

What astonished us most was AskWriter’s use of tone. The structure and consistent active voice conveyed a genuine sense of “passion for the subject.”

Test #3

Difficulty: Hard

Our prompt: ‘To what extent was Jimi Hendrix responsible for the popularization of rock and roll?’

We’ll be honest: we set AskWriter up to fail with this prompt. It is rooted in a nebulous idea, one that could support a hundred different arguments. But after seeing how well AskWriter handled business-focused prompts, we wanted to see how it would react to a completely different subject.

And AskWriter didn’t disappoint. The article certainly wasn’t on par with anything produced by a music historian, but the facts it chose to present were true, the arguments were solid, and the article structure was impeccable.

Even in additional tests where we changed the criteria and forced AskWriter to include seemingly irrelevant facts, the platform was still able to produce a passable piece of content.

Our Verdict on AskWriter

Overall, AskWriter has very real, tangible value for enterprises. It can’t be the final voice in a business’s text content, but it can absolutely produce a lump of raw content that an editor then refines into something useful. Its customizability is the source of its real value, giving it the flexibility a generative AI needs to be effective in the business world.

Comparison of Generative AI Tools for Content Professionals

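| Tool | Pros | Cons |
|---|---|---|
| ChatGPT | Excellent conversational ability; fast, accurate facts; creative, on-topic titles and taglines | Generic, surface-level articles; output varies widely between runs; missed a 200-word length in half of runs; struggled with niche terms like ‘content maturity’ |
| Dall-E 2 | Low cost (460 images for $15); on-topic images for simple prompts | Erratic line work; misspelled or nonsensical text in images; generic results; no brand consistency; failed on niche concepts |
| AskWriter | Purpose-built for business writing; highly customizable; readable, well-structured articles, even on unfamiliar topics | Cannot handle chatbot tasks; output still needs an editor’s refinement |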

Should Your Organization Use Generative AI Right Now?

Generative AI is a rapidly improving field of technology that, while unable to compete with professionals today, can be a powerful tool and might become a trusted partner in the near future.

We see today’s generative AI as a helpful jumping-off point for content professionals. These tools can remove some of the monotony and repetition of certain creative tasks, freeing your living, breathing professionals to focus on what they do best. However, even in this capacity, some AIs are better than others.

As generative AIs develop, multiply, and become more technologically sophisticated, we expect to see more of them operating in narrower, more specific scopes. This is a good thing. For example, we can already see that AskWriter seems better suited to business contexts than ChatGPT.

We also anticipate that other content tools and platforms, such as content management systems, will add or integrate generative AI capabilities. As our Content Technology Landscape indicates, many already use some type of AI, so incorporating generative AI is a logical progression.

When considering whether your organization should use a generative AI, it’s important to keep your current level of content maturity in mind. Content maturity is often one of the most powerful deciding factors for incorporating any sort of new technology into your content production process. If you are unsure about your organization’s level of content maturity, check out our content operations maturity assessment and find out.

It’s time for organizations of all sizes to experiment with generative AI so they will be well-poised to make the most of its benefits.

